90% of Your Splunk Data Is Helping Attackers, Not Analysts

In most Splunk environments, more than 90% of the data is written once and never read.

This isn't just a budget problem. Every GB of unused data actively undermines your security posture:

- Critical alerts get buried in routine noise that nobody needs

- Investigations take longer as analysts wade through irrelevant events

- Intrusions hide more easily in environments with high baseline noise

- Detection rules lose precision because they can't distinguish signal from noise

- And you're paying for all of it

Teams don't hoard data because they want to. They hoard it because they can't see what is safe to reduce without breaking detection or losing visibility.

Why Teams Struggle to Optimize Splunk Data

Most Splunk environments evolve organically over years. New integrations get added quickly, sourcetypes multiply, and indexes grow unevenly.

Over time, you inherit a data footprint that's impossible to reason about:

- Which indexes are actually driving your volume?

- Which sourcetypes represent the bulk of ingestion?

- Which data sources could be filtered, sampled, or optimized?

- What's high-value security data versus operational noise?

Without visibility into these questions, data reduction becomes a guessing game. Teams are forced to choose between reducing aggressively and hoping nothing breaks, or leaving everything untouched because the risk feels too high.

Neither is sustainable.

What You Actually Need to See

To make informed decisions about Splunk optimization, you need to understand:

Relative volume by data source – Which indexes and sourcetypes dominate your footprint? A small number of sources often drive the majority of volume.

Reduction potential – Based on data source type and integration method, what percentage of data could realistically be filtered or optimized without impacting visibility?

Some of this information exists in Splunk, but it's scattered across license usage data, index statistics, and sourcetype metadata. Aggregating it into a coherent view, especially one that identifies specific optimization opportunities, takes manual work that most teams never get around to.
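As a rough illustration of the relative-volume view, the Python sketch below sums byte counts by index and sourcetype and converts them to shares of the total. The record keys `idx`, `st`, and `b` are assumptions modeled on the fields found in Splunk license usage events, the sample values are invented, and none of this is the app's actual implementation.

```python
from collections import defaultdict


def relative_volume(records):
    """Return (index, sourcetype, bytes, share) rows, largest volume first."""
    totals = defaultdict(int)
    for rec in records:
        # Sum bytes per (index, sourcetype) pair.
        totals[(rec["idx"], rec["st"])] += rec["b"]

    grand_total = sum(totals.values())
    if grand_total == 0:
        return []

    rows = [(idx, st, b, b / grand_total) for (idx, st), b in totals.items()]
    rows.sort(key=lambda row: row[2], reverse=True)
    return rows


# Hypothetical sample records; real input would come from an export.
sample = [
    {"idx": "main", "st": "app:debug", "b": 600},
    {"idx": "main", "st": "app:debug", "b": 200},
    {"idx": "security", "st": "firewall", "b": 150},
    {"idx": "main", "st": "syslog", "b": 50},
]

for idx, st, b, share in relative_volume(sample):
    print(f"{idx:10} {st:12} {b:6d} {share:6.1%}")
```

Working with shares rather than raw bytes keeps the comparison meaningful whatever licensing model applies.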

A Clear View of Where Your Data Comes From

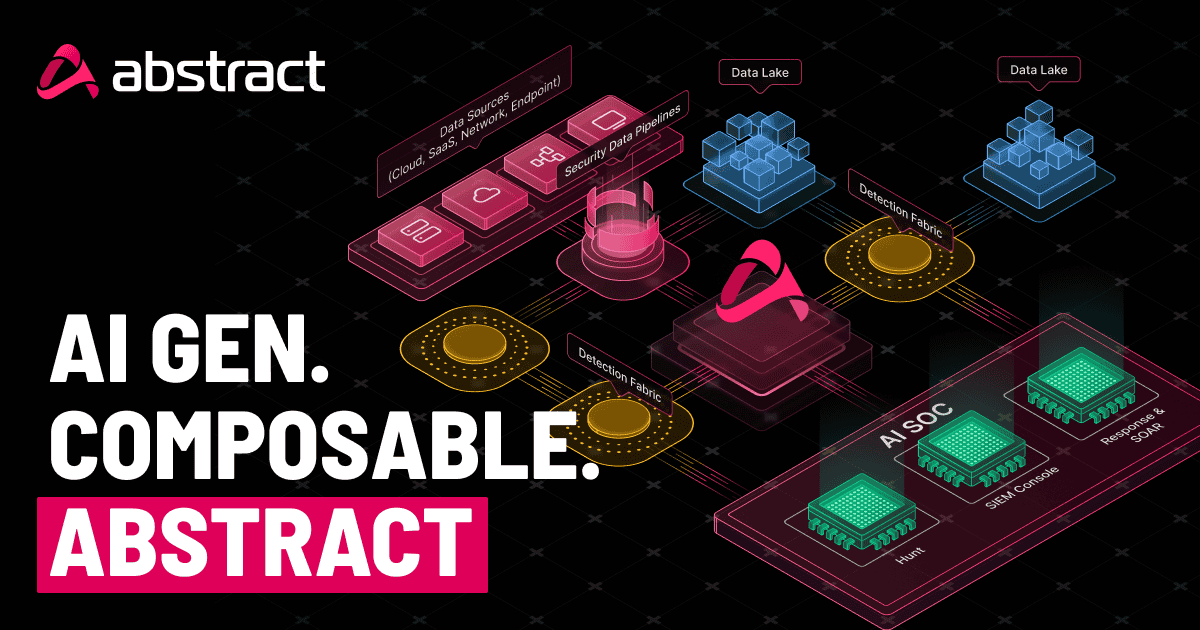

We built a free Splunk app that does this analysis for you.

It installs directly into your Splunk environment, analyzes your indexes and sourcetypes, and produces a visual dashboard showing:

- Which data sources dominate your footprint – relative volume by index and sourcetype

- Where optimization opportunities exist – projected reduction potential based on integration type

- Before and after projections – visualize the impact of potential changes

- Volume distribution – pie charts and comparisons showing how data is spread across your environment

The app's analysis draws on patterns observed across hundreds of Splunk environments we've optimized. For example:

- In HTTP Event Collector sources, 30-40% of the data can often be filtered without impacting security visibility (health checks, verbose debug logs, duplicate events)

- Generic REST API integrations frequently include operational data that's never queried for security purposes

- Verbose application logs typically have significant reduction potential through sampling or filtering

The app matches your sourcetypes to these patterns and calculates estimated reduction percentages specific to your environment.
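To make the matching step concrete, here is a minimal Python sketch of pattern-based classification. Everything in it is an assumption for illustration: the glob patterns, the sourcetype names, and the reduction fractions are hypothetical, except that 0.35 is simply the midpoint of the 30-40% range quoted above for HTTP Event Collector sources. The app's real pattern library is not shown here.

```python
from fnmatch import fnmatchcase

# (glob pattern, integration type, assumed reducible fraction).
# All entries are illustrative placeholders, not the app's real rules.
PATTERNS = [
    ("httpevent*", "HTTP Event Collector", 0.35),  # midpoint of 30-40%
    ("rest:*", "Generic REST API", 0.25),          # hypothetical value
    ("*:debug", "Verbose application log", 0.50),  # hypothetical value
]


def classify(sourcetype):
    """Return (integration type, reducible fraction) for a sourcetype.

    The first matching pattern wins; unmatched sourcetypes get 0.0 so
    they are never assumed to be reducible.
    """
    name = sourcetype.lower()
    for pattern, integration, fraction in PATTERNS:
        if fnmatchcase(name, pattern):
            return integration, fraction
    return "unmatched", 0.0


for st in ["httpevent", "REST:inventory", "myapp:debug", "cisco:asa"]:
    integration, fraction = classify(st)
    print(f"{st:16} {integration:26} {fraction:.0%}")
```

Defaulting unmatched sourcetypes to zero is a deliberately conservative choice: an estimate should only claim savings where a known pattern applies.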

How It Works

The app analyzes your Splunk environment to:

- Measure relative data volumes by index and sourcetype

- Identify integration patterns by matching sourcetypes to known ingestion methods (HTTP Event Collector, REST APIs, syslog, etc.)

- Calculate reduction estimates based on common optimization opportunities for each integration type

- Project before/after sizing so you can see the potential impact
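The before/after projection in the last step is simple arithmetic, sketched below in Python under stated assumptions: each source has a current relative volume and an estimated reducible fraction between 0 and 1. The source names and numbers are invented, and the function is a hypothetical helper, not the app's code.

```python
def project(sources):
    """Project per-source and overall volume after reduction.

    sources: list of (name, current_volume, reducible_fraction).
    Returns (rows, before_total, after_total, overall_reduction), where
    each row is (name, before, after).
    """
    rows = []
    for name, volume, fraction in sources:
        if not 0.0 <= fraction <= 1.0:
            raise ValueError(f"fraction for {name!r} must be in [0, 1]")
        rows.append((name, volume, volume * (1.0 - fraction)))

    before = sum(row[1] for row in rows)
    after = sum(row[2] for row in rows)
    overall = 0.0 if before == 0 else 1.0 - after / before
    return rows, before, after, overall


# Hypothetical inputs: relative volume units and assumed fractions.
example = [
    ("hec_events", 400, 0.35),
    ("firewall", 500, 0.0),
    ("app_debug", 100, 0.50),
]

rows, before, after, overall = project(example)
for name, b, a in rows:
    print(f"{name:12} before={b:7.1f} after={a:7.1f}")
print(f"total before={before:.1f} after={after:.1f} reduction={overall:.1%}")
```

Note that the overall reduction is weighted by volume, so a large source with a modest fraction can matter more than a small source with a high one.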

Important: The app measures relative data volume per source—not absolute licensing costs. Splunk environments can be licensed on ingest size, disk size, or compute usage, and compression settings affect these differently. What matters is the comparative view: understanding which data sources drive your footprint and where optimization makes sense, regardless of your specific licensing model.

Designed for Analysis, Not Automation

The app doesn't change your data or enforce policies. It's a diagnostic tool—showing you where your volume comes from and where reduction opportunities exist.

What you do with that information is up to you. Some teams use it to:

- Identify unused integrations that can be turned off entirely

- Find verbose data sources that can be filtered or sampled

- Prioritize which sources to investigate first based on volume contribution

- Build a business case for data optimization projects with concrete numbers

The reduction estimates are based on patterns we've observed across Splunk environments. The actual reduction possible in your specific environment depends on your use cases, compliance requirements, and data value—but at least you'll know where to look.

Get Started

The app is free and installs directly into your Splunk environment. No data leaves your system—all analysis happens within your existing Splunk instance.

Request the Splunk Data Optimization Analysis App

For teams that can't install apps directly, we can conduct the same analysis using exported data from your environment. Click here to reach an Abstract field engineer.

Most Splunk environments are paying for data they don't need while that same data obscures the signals that matter. The first step to fixing it is seeing where your volume actually comes from.
